Proxmox ZFS Tuning: ARC, L2ARC, and SLOG Guide

Learn how to tune ZFS ARC size, add L2ARC SSD caching, and configure SLOG on Proxmox for maximum storage performance. Practical tips for homelabs and production.

ZFS is one of the best reasons to run Proxmox in your homelab or on-prem infrastructure. It gives you data integrity, snapshots, compression, and replication out of the box. But the default configuration is conservative, designed to work on anything from a Raspberry Pi to an enterprise server. If you have spare RAM, an NVMe drive, or a dedicated write log device and have not tuned anything, you're leaving significant performance on the table.

This guide walks through the three most impactful ZFS tuning levers on Proxmox: the Adaptive Replacement Cache (ARC), the Level 2 ARC (L2ARC), and the Separate Intent Log (SLOG). We'll cover what each one does, when to use it, and exactly how to configure it.

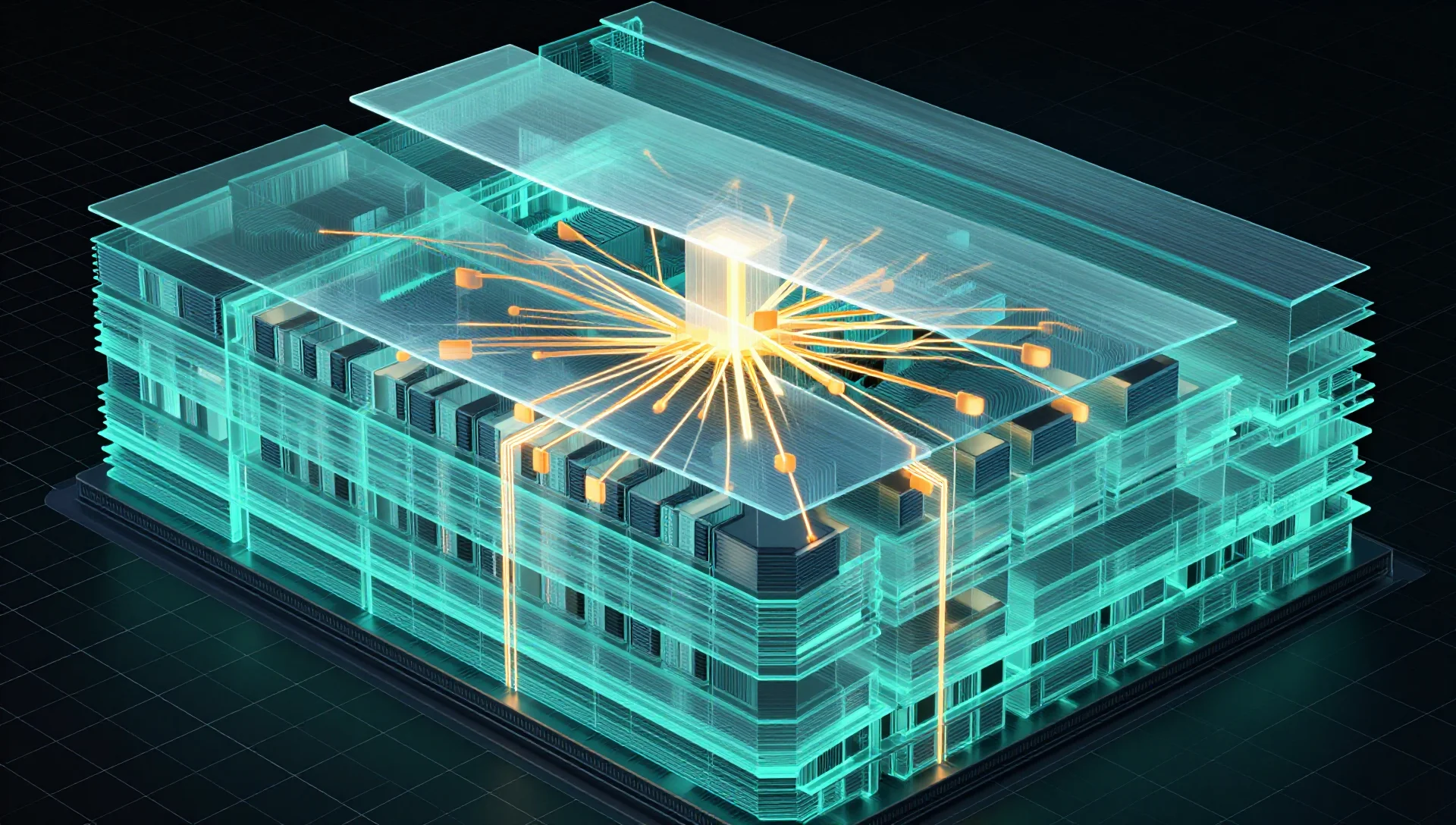

Understanding ZFS Caching Architecture

Before touching any settings, it helps to understand how ZFS uses storage tiers.

ZFS has a layered caching model:

- ARC — In-RAM read cache. The fastest tier. All ZFS reads go through ARC first.

- L2ARC — A secondary read cache on a fast SSD or NVMe. Spills over from ARC.

- SLOG (ZIL device) — A dedicated write log device that accelerates synchronous writes. Does not cache reads.

- Main pool — Your actual storage (HDDs, SSDs, or mixed).

Getting these right for your hardware profile is the difference between sluggish VM disk I/O and a snappy, responsive system.

Tuning the ARC (Adaptive Replacement Cache)

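The ARC lives entirely in RAM. ZFS will use as much memory as you allow: the OpenZFS default on Linux is up to half of total system RAM, so on a Proxmox host with 64 GB RAM ZFS might claim 32 GB just for disk cache. Hosts installed with the Proxmox VE 8.1 or newer installer start with a lower limit (10% of RAM, capped at 16 GiB), so check what your host is actually using before changing anything.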

For a pure storage node, that's fine. For a Proxmox hypervisor running VMs and containers, you need to leave RAM headroom for your guests.

Check Current ARC Usage

arc_summary

If arc_summary isn't installed (it ships with the ZFS userland tools):

apt install zfsutils-linux

# Or read directly:

cat /proc/spl/kstat/zfs/arcstats | grep -E '^(size|c_max|c_min|hits|misses)'

The key values:

- size: Current ARC size in bytes

- c_max: Maximum allowed ARC size in bytes

- hits / misses: Counters used to compute the cache hit ratio (aim for >90% hits)
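The hit ratio is not printed directly in arcstats, so it has to be derived from the hits and misses counters. A minimal sketch, assuming the usual three-column kstat layout (name, type, data); arc_hit_ratio is a hypothetical helper name, not a ZFS tool:

```bash
# Print the ARC hit ratio in percent from an arcstats-style file.
# arc_hit_ratio is a hypothetical helper, not part of ZFS.
# $1 = path to the stats file (defaults to the live kstat file)
arc_hit_ratio() {
    awk '$1 == "hits"   { h = $3 }
         $1 == "misses" { m = $3 }
         END {
             if (h + m > 0) printf "%.1f\n", 100 * h / (h + m)
             else print "n/a"
         }' "${1:-/proc/spl/kstat/zfs/arcstats}"
}

# Only query the live counters when the ZFS module is loaded.
if [ -r /proc/spl/kstat/zfs/arcstats ]; then
    echo "ARC hit ratio: $(arc_hit_ratio)%"
fi
```

The counters are cumulative since the module was loaded, so the result is a long-term average rather than a view of the current workload.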

Set ARC Min and Max Limits

Create a ZFS module config file:

nano /etc/modprobe.d/zfs.conf

Add limits in bytes. For a 64 GB host running VMs, a reasonable starting point:

# Minimum ARC size: 4 GB

options zfs zfs_arc_min=4294967296

# Maximum ARC size: 16 GB

options zfs zfs_arc_max=17179869184

Calculate your values:

# 8 GB in bytes

python3 -c "print(8 * 1024**3)"

# Output: 8589934592
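If you would rather not hand-copy byte counts, the same arithmetic can be done in the shell. This is a small sketch; gib_to_bytes and arc_conf_lines are hypothetical helper names, and the check that the minimum stays below the maximum reflects the assumption that ZFS expects zfs_arc_max to be larger than zfs_arc_min:

```bash
# Convert whole GiB to bytes using shell arithmetic.
gib_to_bytes() {
    echo $(( $1 * 1024 * 1024 * 1024 ))
}

# Print the two option lines for /etc/modprobe.d/zfs.conf.
# $1 = minimum ARC size in GiB, $2 = maximum ARC size in GiB
# Refuses min >= max (assumption: ZFS wants max greater than min).
arc_conf_lines() {
    min_gib=$1
    max_gib=$2
    if [ "$min_gib" -ge "$max_gib" ]; then
        echo "error: zfs_arc_min must be smaller than zfs_arc_max" >&2
        return 1
    fi
    echo "options zfs zfs_arc_min=$(gib_to_bytes "$min_gib")"
    echo "options zfs zfs_arc_max=$(gib_to_bytes "$max_gib")"
}

# Example: the 4 GB / 16 GB limits used in this guide.
arc_conf_lines 4 16
```

Redirect the output into /etc/modprobe.d/zfs.conf once the values look right.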

Apply without rebooting:

echo 17179869184 > /sys/module/zfs/parameters/zfs_arc_max

echo 4294967296 > /sys/module/zfs/parameters/zfs_arc_min

Update initramfs so the setting persists after reboot:

update-initramfs -u -k all

ARC Sizing Guidelines

| Host RAM | VMs/Containers | Recommended ARC Max |
|---|---|---|
| 16 GB | 2–4 VMs | 4–6 GB |
| 32 GB | 4–8 VMs | 8–12 GB |
| 64 GB | 8–16 VMs | 16–24 GB |
| 128 GB | 16+ VMs | 32–48 GB |
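Every row of the table works out to between one quarter and three eighths of host RAM, so the range for other memory sizes can be estimated the same way. A rough sketch (arc_max_range is a hypothetical helper, and the rule is only a starting point read off the table, not an OpenZFS recommendation):

```bash
# Print "LOW HIGH" in GiB for a host RAM size given in GiB,
# using the 1/4 to 3/8 pattern that the table rows follow.
arc_max_range() {
    ram_gib=$1
    echo "$(( ram_gib / 4 )) $(( ram_gib * 3 / 8 ))"
}

# Reproduce the table rows.
for ram in 16 32 64 128; do
    range=$(arc_max_range "$ram")
    echo "${ram} GiB host: ARC max ${range% *}-${range#* } GiB"
done
```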

If your workload is read-heavy (NFS, media streaming, databases with warm caches), push ARC higher. If it's write-heavy or mostly fresh data, extra ARC won't help much.

Adding L2ARC: SSD Read Cache

L2ARC extends your read cache onto a fast SSD or NVMe. ZFS periodically copies blocks that are about to be evicted from the RAM-based ARC onto the L2ARC device, so they stay cached after they leave RAM. Subsequent reads pull from L2ARC rather than hitting spinning disks.

When L2ARC Actually Helps

L2ARC is worth adding if:

- Your pool uses HDDs and you have a spare SSD

- Your working dataset is larger than your ARC

- Read hit rates are below 80% under normal load

- You're running databases, media servers, or NFS with repeated access patterns

L2ARC does not help if:

- Your entire working dataset fits in ARC already

- Your workload is 100% sequential writes (backups, logging)

- Your pool is already all-SSD or NVMe

L2ARC RAM Overhead

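This is the part most guides skip: L2ARC metadata is stored in ARC. Every block cached in L2ARC needs a header in RAM, on the order of 70–100 bytes on current OpenZFS releases, so the cost depends on block size rather than on device size alone. A 500 GB L2ARC filled with 16K blocks needs roughly 2–3 GB of ARC for headers; the same device filled with 128K records needs only about 0.3–0.4 GB.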

For homelabs, keep L2ARC devices under 200–300 GB unless you have abundant RAM.
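To get a feel for the RAM cost before buying a cache device, the header overhead can be estimated from the device size and the typical block size. This is a back-of-the-envelope sketch: l2arc_header_mib is a hypothetical helper, and the 96-byte default per header is an assumption that varies between OpenZFS versions:

```bash
# Estimate ARC memory consumed by L2ARC headers, in MiB.
#   $1 = L2ARC size in GiB
#   $2 = average cached block size in KiB (e.g. 16 for zvols, 128 for files)
#   $3 = bytes of ARC per cached block (assumed value, default 96)
l2arc_header_mib() {
    l2_gib=$1
    block_kib=$2
    hdr_bytes=${3:-96}
    # Number of blocks that fit on the device at this block size.
    blocks=$(( l2_gib * 1024 * 1024 / block_kib ))
    echo $(( blocks * hdr_bytes / 1024 / 1024 ))
}

echo "500 GiB of 16K blocks:  $(l2arc_header_mib 500 16) MiB of ARC"
echo "500 GiB of 128K blocks: $(l2arc_header_mib 500 128) MiB of ARC"
```

Small blocks are what make a large L2ARC expensive, so the estimate matters most for zvol-backed VM disks and databases.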

Add a Device as L2ARC

Identify your SSD:

lsblk -o NAME,SIZE,ROTA,MODEL

# ROTA=0 means SSD/NVMe

Add it to your pool as a cache device:

# Replace 'rpool' and '/dev/sdc' with your pool and device

zpool add rpool cache /dev/sdc

Verify it's active:

zpool status rpool

You should see a cache section listing the device.

Monitor L2ARC Hit Rate

cat /proc/spl/kstat/zfs/arcstats | grep l2

Key fields:

- l2_hits: Reads served from L2ARC

- l2_misses: Reads that missed L2ARC and went to disk

- l2_size: Current L2ARC data size

After a warm-up period (hours to days depending on workload), aim for L2ARC hit rates above 50% on top of your ARC hits.
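The L2ARC hit rate can be derived from the same counters. A minimal sketch, assuming l2_size is reported in bytes and the kstat file uses the usual name, type, data columns; l2arc_report is a hypothetical helper name:

```bash
# Summarise L2ARC hit rate (percent) and cached data size (GiB).
# $1 = path to the stats file (defaults to the live kstat file)
l2arc_report() {
    awk '$1 == "l2_hits"   { h = $3 }
         $1 == "l2_misses" { m = $3 }
         $1 == "l2_size"   { s = $3 }
         END {
             ratio = (h + m > 0) ? 100 * h / (h + m) : 0
             printf "l2_hit_pct=%.1f l2_size_gib=%.1f\n", ratio, s / (1024 ^ 3)
         }' "${1:-/proc/spl/kstat/zfs/arcstats}"
}

# Only query the live counters when the ZFS module is loaded.
if [ -r /proc/spl/kstat/zfs/arcstats ]; then
    l2arc_report
fi
```

A hit rate that stays near zero while l2_size keeps growing suggests the cache is filling with data that is never read again.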

Remove L2ARC Device

L2ARC only holds copies of blocks that already live in the pool, so removing it loses no data, only cached reads:

zpool remove rpool /dev/sdc

Configuring SLOG (ZFS Intent Log)

SLOG is commonly misunderstood. It does not cache reads. It accelerates synchronous writes by providing a fast, durable landing zone for ZFS's intent log.

How the ZFS Intent Log Works

Every synchronous write (NFS, iSCSI, databases, VMs with sync=always) waits for data to be committed to stable storage before returning. Without a SLOG, ZFS writes the intent log (ZIL) record to the main pool and only then acknowledges the write, which is slow on spinning rust.

With a SLOG, ZFS writes the log record to the fast SLOG device and acknowledges as soon as it is durable there. The data itself stays in RAM and is flushed to the main pool in bulk with the next transaction group. The SLOG is only read back after a crash or power loss, when ZFS replays it on the next pool import, so it only needs to hold a few seconds of writes.

When SLOG Helps

- NFS shares with sync left at its default (sync=standard on the dataset)

- iSCSI targets

- Database VMs with sync I/O (PostgreSQL, MySQL, etc.)

- Any workload where iostat shows high await on write operations

SLOG does not help for:

- Async workloads (for example, datasets with sync=disabled)

- Pure read workloads

- Workloads already bottlenecked by CPU or RAM

SLOG Device Requirements

The SLOG device must have:

- Low write latency: Under 100µs is ideal (Intel Optane, enterprise NVMe)

- Power-loss protection: Consumer NVMe drives without a capacitor-backed cache can lose log writes they have already acknowledged if power fails. Use enterprise drives with power-loss protection, or at minimum a UPS.

- High endurance — SLOG gets hammered with writes. Use a drive rated for high write workloads (DWPD ≥ 3).

A cheap consumer SSD as SLOG can be worse than no SLOG if it lacks power-loss protection.

Add a SLOG Device

# Add mirrored SLOG for redundancy (recommended for production)

zpool add rpool log mirror /dev/nvme1n1 /dev/nvme2n1

# Alternative: single SLOG (acceptable with UPS protection)

zpool add rpool log /dev/nvme1n1

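Always mirror SLOG in production. If a SLOG device fails while the system is running, no data is lost: ZFS falls back to the in-pool ZIL and synchronous writes drop back to pool speed until you replace it. The dangerous case is a SLOG failure combined with a crash or power loss, which can lose the sync writes that existed only in the log.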

Verify:

zpool status rpool

# Should show a 'logs' section
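For scripted checks, the same verification can be done by scanning the zpool status output for the logs and cache section headers. A small sketch, assuming those headers appear alone on their line as they do in current zpool status output; pool_aux_vdevs is a hypothetical helper name:

```bash
# Read `zpool status` text on stdin and print which auxiliary
# sections (logs, cache) are present. Section headers are lines
# that contain only that single word.
pool_aux_vdevs() {
    awk 'NF == 1 && ($1 == "logs" || $1 == "cache") { print $1 }'
}

# Run against the live pool only when the zpool command exists.
if command -v zpool >/dev/null 2>&1; then
    zpool status rpool 2>/dev/null | pool_aux_vdevs
fi
```

An empty result means the pool has neither a SLOG nor an L2ARC device attached.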

Proxmox Storage Sync Setting

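Whether VM disk writes are synchronous is controlled by the ZFS sync property on the dataset behind your Proxmox storage. Set it from the shell with zfs set sync=<value> <pool>/<dataset>; it is not a field in the Datacenter → Storage dialog. The three values are:

- sync=standard: The default. Honors sync requests from the guest or application (fsync, O_SYNC); everything else is written asynchronously

- sync=always: Forces every write to be synchronous (maximum safety, needs SLOG)

- sync=disabled: Treats all writes as async (fastest, risks losing the last few seconds of writes on power loss)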

For VMs on a pool with a good SLOG and UPS, sync=always gives you both safety and performance.
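Before changing anything, it helps to see which datasets already deviate from the default. The sketch below filters the tab-separated output of zfs get; sync_overrides is a hypothetical helper name and rpool is just the example pool used in this guide:

```bash
# Read "name<TAB>value" lines (as printed by `zfs get -H -o name,value sync`)
# and print every dataset whose sync setting is not "standard".
sync_overrides() {
    awk -F '\t' '$2 != "standard" { print $1 ": sync=" $2 }'
}

# Run against the live pool only when the zfs command exists.
if command -v zfs >/dev/null 2>&1; then
    zfs get -r -H -o name,value sync rpool 2>/dev/null | sync_overrides
fi
```

Any dataset listed with sync=disabled is trading crash safety for speed, and a SLOG will make no difference to it.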

ZFS Compression: Free Performance

While not strictly caching, ZFS compression deserves mention here because it effectively increases both I/O throughput and ARC capacity.

# Enable lz4 compression on a dataset (fast, moderate ratio)

zfs set compression=lz4 rpool/data

# Check current compression ratio

zfs get compressratio rpool/data

lz4 is the right default for most workloads — CPU overhead is negligible on modern processors and ratios of 1.5x–3x are common for VM images and text-heavy data.

zstd compresses better but uses more CPU. Use it for archival datasets, not active VM storage.

Proxmox-Specific ZFS Recommendations

Separate OS and VM Storage Pools

Don't run your Proxmox OS root (rpool) and VM storage on the same pool unless you have no choice. Noisy VM disk I/O degrades system responsiveness.

Create a dedicated pool for VMs:

# Example: 4-drive raidz2 for VM storage

zpool create vmdata raidz2 /dev/sda /dev/sdb /dev/sdc /dev/sdd

# Add it as storage in Proxmox

# Datacenter → Storage → Add → ZFS → select vmdata

Adjust recordsize for VM Workloads

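The default ZFS recordsize is 128K. Workloads with small random I/O perform better with smaller records. Note that recordsize applies to filesystem datasets (container volumes, or image files stored on a dataset); VM disks that Proxmox creates on ZFS storage are zvols, whose block size is fixed at creation by volblocksize (the Block Size field of the storage).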

# For VM disk datasets

zfs set recordsize=64K vmdata/vm-disks

# For databases (8K matches PostgreSQL pages; MySQL/InnoDB uses 16K pages)

zfs set recordsize=8K vmdata/db-storage

# For NFS media / backups (large sequential)

zfs set recordsize=1M vmdata/backups

This only affects new writes — existing data uses the recordsize it was written with.

Disable atime for VM Storage

zfs set atime=off vmdata

Access time updates on every file read generate unnecessary write amplification. There's no reason to track atime on VM disk images.

Monitoring and Ongoing Tuning

ZFS tuning isn't set-and-forget. Monitor regularly:

# Real-time pool I/O stats

zpool iostat -v 2

# ARC stats summary

arc_summary | head -40

# Check for slow disks or errors

zpool status -v

Install zfs-auto-snapshot if you want automated snapshots without manual scheduling:

apt install zfs-auto-snapshot

And periodically run scrubs to verify data integrity:

# Start a scrub

zpool scrub vmdata

# Check scrub status

zpool status vmdata

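Debian's zfsutils-linux package already ships a monthly scrub job in /etc/cron.d/zfsutils-linux. To scrub weekly instead, add your own cron job: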

echo "0 2 * * 0 root /sbin/zpool scrub vmdata" > /etc/cron.d/zfs-scrub

Conclusion

ZFS tuning on Proxmox comes down to matching your configuration to your hardware and workload. Set ARC limits so VMs have the RAM they need. Add L2ARC only when your working dataset exceeds available RAM and you have a spare SSD. Deploy SLOG on enterprise-grade NVMe when synchronous write latency matters — and back it with a UPS. Enable lz4 compression everywhere, tune recordsize to your I/O pattern, and scrub regularly.

Start with ARC tuning since it's zero-cost and immediately impactful. Add caching tiers incrementally, measure before and after with zpool iostat and arc_summary, and resist the urge to throw hardware at a problem that's actually a misconfiguration. With the right settings, a ZFS pool on Proxmox can rival enterprise SAN performance at a fraction of the cost.